Vibe Coding, QWERTY, and US Healthcare - or: The Future of Software Engineering?

Posted on 2026-03-18 20:45 by Timo Bingmann. Tags: #ai #coding

Summary (TL;DR)

Why is changing existing code with AI so much harder than writing new code?

Vibe coding feels like using a newfound superpower: no prior experience needed, no constraints, pure speed, fast results. But changing existing code with AI is so much harder. Why?

My thesis: code is pure dependencies.

Every function call, every shared data structure is an edge in a dependency graph. And those edges exhibit path dependency: early decisions dig grooves the system can't escape—just like the QWERTY keyboard layout (designed in 1878 for mechanical typewriters) and US employer-sponsored healthcare (born from WWII wage controls). New code has no dependencies: that's why it's easy. Existing code by its very nature is a tangled dependency graph, and that's why it's so much harder to change, and AI agents can only help us so far.

The job of professional software developers has shifted. We no longer write code; we create guardrails (interfaces, contracts, tests, documentation) that keep AI agents on track and reduce path dependency so we don't get stuck. These guardrails must be validated by mechanisms other than AI: today's LLMs and AI agents cannot be relied on to validate contracts. The antidotes to the tangled mess of dependencies are well-known software development concepts, and this article highlights how to adapt them to the age of AI coding:

- Modularity – clear boundaries with agent-readable, compiler-checkable contracts

- Exchangeable implementations – validate interfaces by building multiple implementations against them

- Planned interface evolution – extend without breaking (and let agents update required parameters across the changeable codebase)

- Testing – essential but double-edged; every test adds a dependency edge, so favor integration tests over fine-grained unit tests

- Documentation – LLMs are incredibly fast readers; the importance of good docs has skyrocketed

- Larger repositories – enable atomic cross-module changes

- External dependency tracking – the hardest problem, requiring deprecation protocols and clear timelines

These are some of the patterns that make AI coding work on large, real, existing codebases—not just greenfield toys. The individual developer is faster, but the organizational machinery around software development hasn't yet caught up.

To close this gap, we need to understand why existing code resists change so stubbornly, and what we can do about it. The answer starts with path dependency—a force that shapes everything from QWERTY keyboards to healthcare systems—and leads to a practical toolkit for software engineering in the age of AI.

Table of Contents

- The Age of AI Coding

- What is Path Dependency?

- Code Is Pure Dependencies

- Guardrails for AI Coding

- Conclusion

1. The Age of AI Coding

In the past year, software development has changed dramatically. AI coding tools—Claude Code, Cursor, and others—have fundamentally shifted how we write software. This article is based on my experience across many years of software development, and on extensive use of AI coding agents since mid-2025. It isn't drawn from any software engineering textbook—these observations and principles have proven true in practice, refined through daily work with these tools on both personal and professional projects. They are not meant to be an exhaustive list of software engineering paradigms.

In 2025, source code's cost structure has changed:

- The cost of writing new code has dramatically decreased. What used to take a developer days or weeks of careful implementation can now be generated in minutes. But this is specifically about new code—greenfield work, new features, new modules.

- The value of good interfaces and system designs has risen dramatically. When code is cheap to produce, the bottleneck shifts to the design decisions that determine how that code fits together. Getting the architecture right matters more than ever, because the cost of producing code in the wrong direction has dropped to nearly zero—making it dangerously easy to build the wrong thing quickly.

- We can now globally refactor very quickly, because AI agents will tirelessly apply mechanical changes across larger codebases—changes that would take humans much longer. These would just never be done in a cost-constrained company, leading to continued accumulation of tech debt. An agent can rename a function, update all call sites, adjust tests, and fix documentation across thousands of files without losing focus or making the kinds of careless mistakes that humans make on repetitive tasks.

- Code maintenance cost is still the same. Running the code, checking that it's still good, all the DevOps maintenance work—none of that has been accelerated. The operational burden of software—deployments, monitoring, incident response, infrastructure management—remains fundamentally a human-paced activity. It's really the creation of new code that has seen the dramatic cost reduction.

The AI impact is visible everywhere—we all use it, every day, for various tasks. But it's not always visible at the organizational level where scopes and project velocity are concerned. The individual developer is faster, but the organizational machinery around software development hasn't yet caught up.

We're not really writing code anymore. We're instructing agents to write the code for us. This article is about how we can do that better. It's not about any particular AI coding tool—not a review of Claude Code versus Cursor versus Gemini. Instead, it's about how we structure our code so that the task of AI coding becomes easier and more effective within it. The tools will keep evolving, but the principles of good code structure for AI collaboration will endure.

To get there, let's take an excursion into a completely different realm: systems theory.

2. What is Path Dependency?

Path dependency is a very, very general property of complex, dynamic systems. It's the property that some systems, when you repeatedly make decisions within them, produce grooves. These decisions compound over time: each one makes a particular path slightly more worn, slightly more natural to follow. And once these grooves have gotten deep enough, switching between paths becomes much more difficult. The system settles into a trajectory that is increasingly costly to escape.

On the left of the picture above, the dirt roads have shallow grooves—one can still switch paths pretty easily, cut across to a different road, change direction. But once you pave streets and build cities around them, once you have an entire network of roads with buildings and infrastructure built along specific routes, switching becomes much harder. The cost of changing course grows with every additional structure built along the existing path.

This is a general system property—once you start noticing it, you see it everywhere. The roads in your city look the way they do because someone decided their width and direction a long time ago. Power lines in the United States are commonly above ground on wooden poles because, when the electrical grid was first built, the plastic insulation needed to bury cables underground simply didn't exist yet; and now all the infrastructure is there for building electrical poles quickly and efficiently.

And as we'll see, this property has everything to do with source code.

QWERTY: The Classic Example

The best-known example of path dependency is the QWERTY keyboard layout—the layout most of us type on every day. It's a well-known example precisely because the chain of transmission is so clear and so absurd.

It became popular in 1878 through the Remington typewriter. The design decision was to lay out the keys such that the little mechanical hammers wouldn't get tangled up too much when typing English text. This was a purely mechanical optimization—arranging letters so that commonly used pairs were physically separated, preventing the metal arms from jamming against each other. A concept invented for something completely different from what we use it for today.

The same layout was transferred through each generation of technology, even as the original reason for it disappeared. It persisted on the electric typewriter, even on models that no longer had any little hammers: the IBM Selectric used a spinning ball element instead. The mechanical constraint was gone, but the layout remained unchanged.

It persists on computer keyboards, where a keystroke induces no mechanical printing process at all. The layout that was optimized for preventing mechanical jams in 1878 is still the default today, even on touchscreen keyboards that have zero moving parts.

This keyboard layout persisted because of the path dependency of muscle memory. Each generation of this technology was first adopted by the existing typists, who of course expected the familiar layout to be the same. They had trained their fingers to find specific keys in specific positions, and any new device that wanted adoption had to accommodate those expectations. At no point was it feasible to switch keyboard layouts entirely—the installed base of trained typists was always too large.

Yes, alternative layouts exist—Dvorak, NEO, and others—and enthusiasts have learned them. Studies have shown some of these layouts to be more efficient for typing speed and ergonomics. I tried to switch, and I failed. I'm still using QWERTY, despite it being a known suboptimal layout. That's the power of path dependency—even when one knows the current path is inferior, the switching cost is simply too high.

US Healthcare: A Path-Dependent System

US employer-sponsored healthcare is a textbook case of path dependency, where two events in the 1930s and 1940s set the trajectory that the entire system still follows today. Note that I'm definitely not an expert on the history of healthcare.

In 1935, Roosevelt did not include healthcare as part of the Social Security program. During the Great Depression, there simply wasn't enough political support or money for it. Social Security itself was ambitious enough—adding healthcare on top was a bridge too far.

Then the Second World War happened. During the war, employers couldn't pay higher wages because of strict wage and price controls. So they offered health benefits instead to attract workers. These benefits are still not taxed as wages today—because they were originally designed to circumvent wage controls.

Multiple presidents tried to add some form of universal healthcare afterward, but the path dependency was too strong—employer-sponsored insurance had become deeply entrenched, with an entire industry built around it.

Path dependency is the property that temporally remote events can have lasting effects on a system's outcome, making it progressively harder to move from one path to another. The seminal academic papers on this topic are:

Paul A. David: Clio and the Economics of QWERTY (1985)

A sequence where temporally remote events—including chance—can have lasting effects on the outcome, so the process need not "wash out" history (i.e., it can be non-ergodic).

W. Brian Arthur: Competing Technologies, Increasing Returns, and Lock-In by Historical Events (1989)

A process where increasing returns to adoption amplify small early advantages, so one option can "lock in" while alternatives become progressively harder to dislodge.

Systems Without Strong Path Dependency

Those were all negative examples. But systems also exist that exhibit less path dependency. What makes them different? Can we identify the properties that make some systems easier to change, easier to switch within?

Cooking ingredients. A recipe calls for "flour" and one can switch out any brand of flour. You can often even switch the flour type to almond flour. Yes, cooking purists will say it's not the same because almond flour doesn't have gluten, and yes, there are definitely differences in quality and taste. But the switching cost is orders of magnitude lower than, say, changing a health insurance system. The interface is simple: the recipe asks for a quantity of a type of ingredient, and many things can satisfy that requirement.

The car driving interface. One can rent a car almost anywhere on the planet and find the same interface: a steering wheel, a gas pedal, a brake, all in the same places. Some have a clutch pedal, some are automatic, some are electric—but none of them have a joystick control or some bizarre racing interface. This remarkable standardization across manufacturers, countries, and decades means that the basic skill of driving transfers from one car to another (with some getting used to the extra buttons and lights). The interface is standardized, and that standardization is what reduces path dependency.

Career knowledge. To some extent, you can switch employers and reuse your knowledge at the new job. Your understanding of algorithms, system design, and programming languages transfers across organizations. Knowledge is a less path-dependent system—not zero, of course, since domain expertise can be very specific, but far less locked-in than physical infrastructure.

Plain text documents. You can edit them with any editor: Vim, Emacs, VS Code, Notepad, or anything else. This means far less lock-in than with proprietary binary formats that require specific software to open. The format itself is the interface, and plain text is the most universal format there is.

Money. Perhaps the least path-dependent thing of all. One can sell something, turn it into money, and then buy something entirely different with that money. Money is the great liquidator between all kinds of things—it's a universal interface for exchanging value, which is precisely why it reduces path dependency so effectively.

These systems share a common property: they have well-defined, standardized interfaces that reduce switching costs. The recipe defines what it needs but not which specific product to buy. The car defines where the controls are but not how the engine works internally. Money defines a unit of value but not what that value must be exchanged for. These same principles apply to writing source code—or rather, to having AI write source code.

3. Code Is Pure Dependencies

My central thesis is this: code is pure dependencies. And these dependencies have path dependency properties. Hence we need to think about code as a complex, dynamic, path-dependent system, which is now written by robots.

    f(x, y) = 2*x + 3*y
    r = f(a, b)

Consider the simplest example: a function f with two parameters, x and y, that computes something. This function must be used somewhere—otherwise the code linter will flag it as unused code and tell you to remove it. The moment it's used, even in this simplest case, a dependency exists between the definition and the call site. Two lines of code are now coupled: the line where f is defined and the line where f is called.

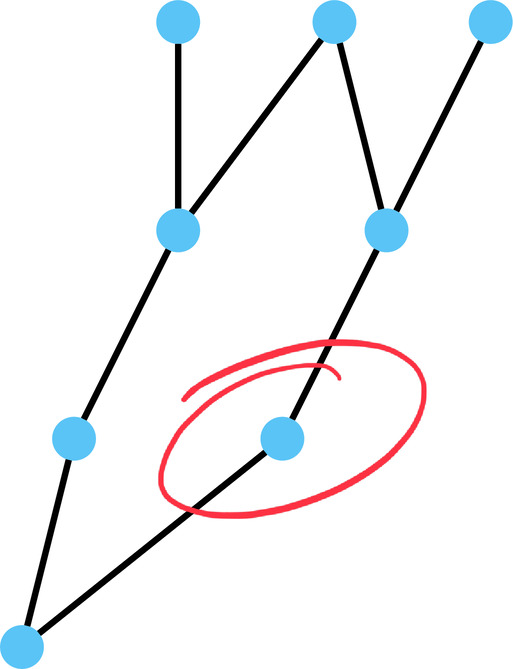

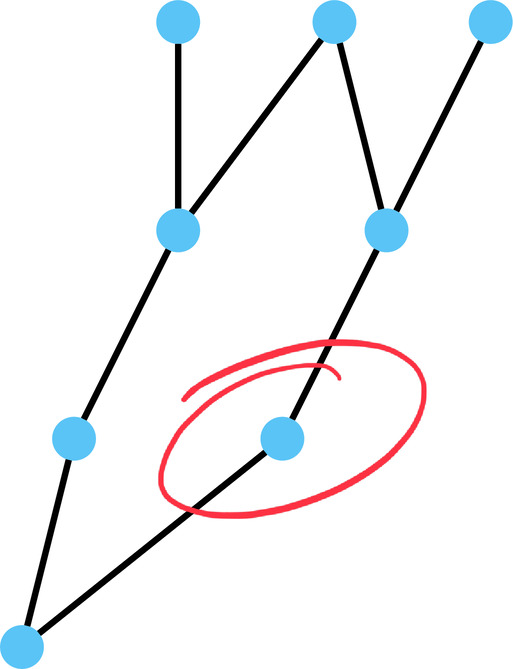

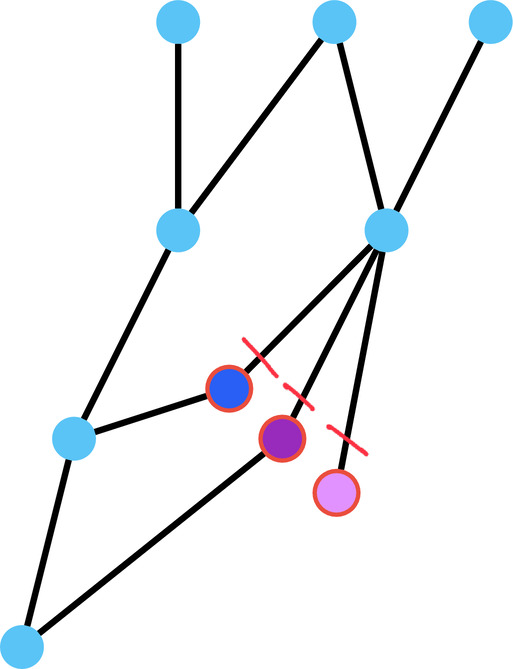

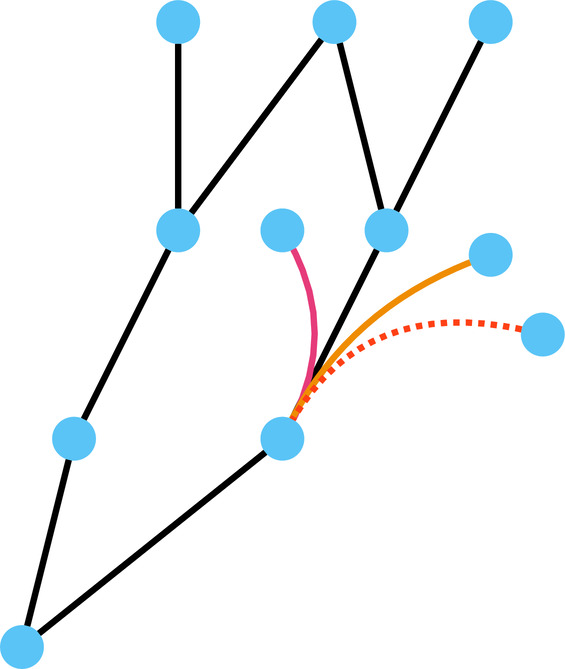

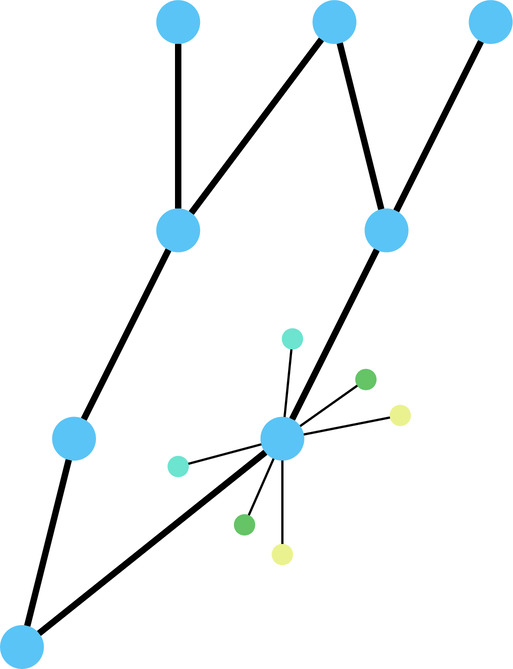

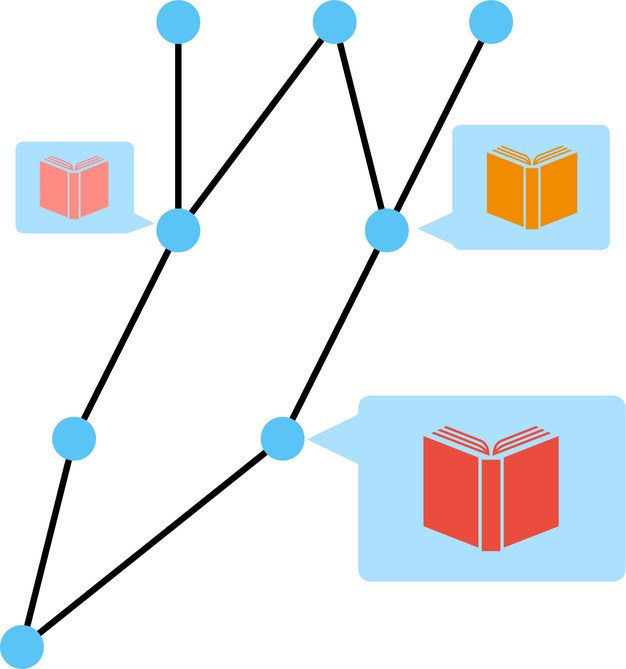

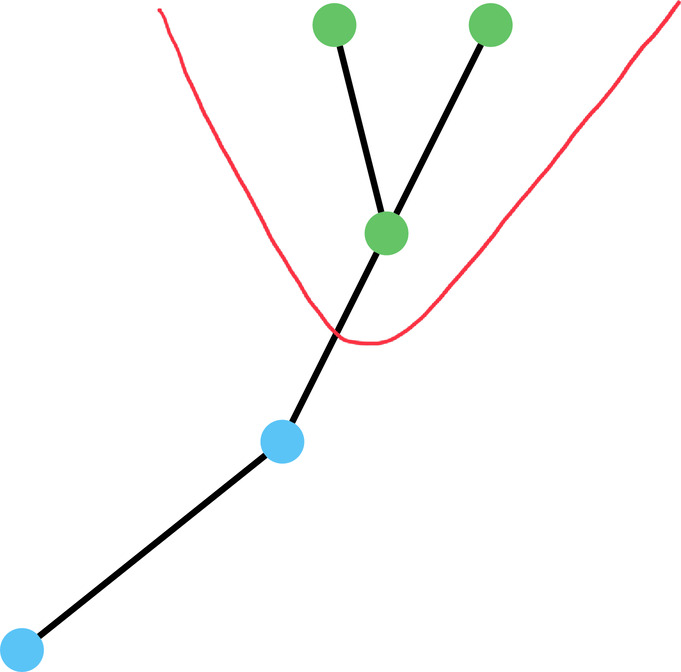

I like to picture these dependencies as a graph, where each dot is a piece of code and the lines between them are the dependency relationships. Graphs are pure adjacency data structures—they're perfect for visualizing this network of connections that every codebase inevitably becomes.

Now suppose I want to add a third parameter to f.

All callers must change: they have to provide this new parameter. Yes, one can add the parameter with a default value, and that avoids breaking existing callers; that is precisely one of the ways to mitigate path dependency. The default-value solution is equivalent to adding a new function f3 with three parameters and rewriting the old f to call the new one with the default filled in. So the outcome differs slightly from a true replacement: we have added a new function while keeping the old one intact. But if we really want to replace the old one, we have to update all of the callers with the third parameter. Either way, the dependency exists, and managing it is the core challenge.
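A minimal sketch of these two options in Python, reusing the f from the example above. The third parameter z, its weight of 5, and the names f3 and f_old are hypothetical, chosen only for illustration.

```python
# Option 1: add the new parameter with a default that preserves old behavior.
def f(x, y, z=0):
    return 2 * x + 3 * y + 5 * z


# Option 2: the same idea spelled out as two functions.
# f3 is the new three-parameter function; f_old keeps the old
# two-parameter signature and simply delegates to f3.
def f3(x, y, z):
    return 2 * x + 3 * y + 5 * z


def f_old(x, y):
    return f3(x, y, 0)


a, b = 1, 2
r = f(a, b)            # existing call site, unchanged
r_new = f(a, b, z=4)   # new call site that opts in to the third parameter
```

In both variants the old call sites keep working, which is exactly why the old shape of the function never goes away on its own.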

What happens if function f changes the result it computes—not the parameters, but the output? Do the callers have to know about this?

The answer, as so often: it depends. It depends on the semantics of the result and how callers use it. Is the result supposed to be something very specific, or is it reused in a different context where the exact value doesn't matter? There's no universal answer, but the dependency still holds—the link between definition and call site remains, and changes can ripple through in ways that are hard to predict.

This brings us to a question that anyone who has used AI coding tools has encountered: why is it easy to vibe-code something new, while changing existing code is so much harder? The answer lies in these dependencies. When we vibe-code something new, we're writing on a blank slate—there are no existing dependencies to respect. But when we change existing code, every line that calls another line produces a dependency, and if these dependencies get tangled, that's when we get stuck. The AI agent implicitly makes decisions on how to structure the code, and it produces grooves in the codebase—dependency paths. If these get tangled too much, that's when vibe coding fails.

The goal is to reduce the path dependency of the source code we produce, so that we—and our AI agents—don't land in a tangled mess.

Vibe Coding the Inner Nodes

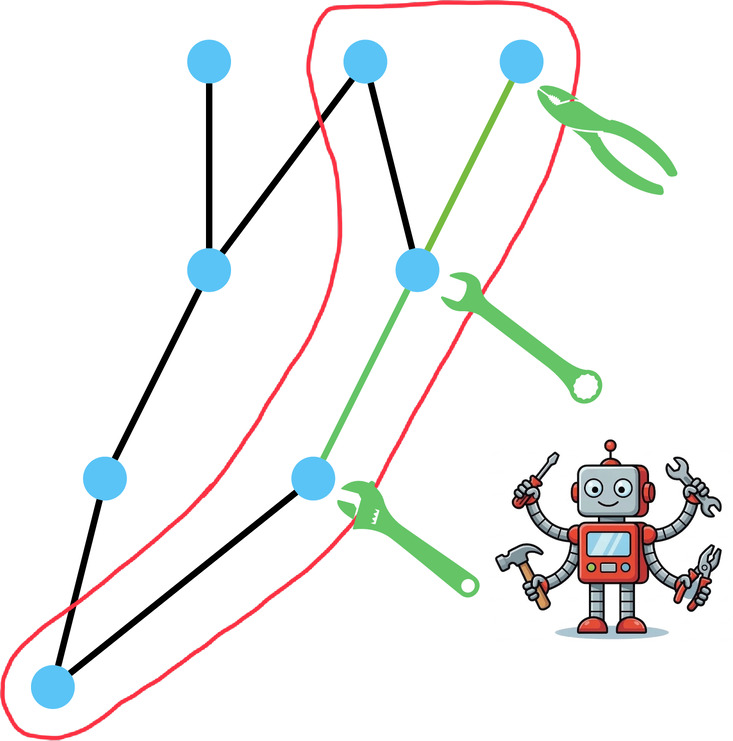

This line of thinking started when I watched Anthropic's "Vibe Coding in Prod" video from May 2025. The presenter showed a tree of source code and advised: use vibe coding to create leaf nodes—individual features, utilities, isolated components—while the core architecture must still be understood by humans. The caveat of vibe coding, he noted, is tech debt. This is sound advice, and it describes the current state of practice for most teams using AI coding tools today.

But this article isn't about leaf nodes. It's about the next step: vibe coding the inner nodes. The goal is to vibe-code the inner nodes in production systems. This is harder—much harder. Leaf nodes are relatively independent; you can throw one away and rewrite it without affecting much else. Inner nodes, by contrast, are the core, interdependent parts of a system—the modules that many other modules depend on. Changing them means changing the contracts that hold the system together, and that's where path dependency bites hardest. For that, we need a toolkit.

4. Guardrails for AI Coding

Here's a blunt statement: humans no longer write code.

Yes, of course, we still write small bits and pieces here and there, but the LLMs are so much better at it. They're so much faster, and they produce better code. Line for line, an AI agent will outpace a human developer in raw code production. Our job has changed.

Our job is now to create guardrails for the AI agents to produce the desired code—to keep them from going off the rails, as it were.

This is why the article has the subtitle "The Future of Software Engineering?"—because our role has shifted from writing code to creating guardrails for the actual code writers, which are now the AI agents. We are no longer the craftspeople hammering out every line; we are the architects defining the constraints within which the code must be produced.

The guardrails at our disposal include:

- Typed languages

- Required parameters

- Tests

- Informal and formal specifications

- Interfaces

These are the tools we use to ensure that the LLMs, when writing code, produce something that fits within the structure we intend, rather than wandering off in an arbitrary direction.

Perhaps the simplest guardrail is the typed parameter struct. Consider this C++ example:

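    #include <string>

    // The contract shared by producer and consumer: named, typed fields.
    struct Widget {
        int a;
        std::string b;
    };

    void consumer(const Widget& w) {
        // uses w.a, w.b
    }

    // Designated initializers (.a = ...) require C++20.
    Widget producer() {
        return Widget{.a = 42, .b = "hello"};
    }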

Compare this to the plain function f from the previous example. The previous function had two parameters—let's say x and y—and in this example, I have two attributes in my Widget instead. So what changed? The data passed between producer and consumer now has a defined contract—the Widget structure, which defines types and names.

Here's the key difference from a plain function: when a function takes two parameters, the parameter names are defined locally. That's what a local parameter is—the calling code need not use the same names for the values it passes. But with the Widget struct, both the producer and the consumer must refer to the attributes by the same names—a and b—and this is enforced by the C++ compiler. The producer constructs a Widget using the defined attribute names, and the consumer accesses those same attributes by name.

Yes, this produces even more dependency: the names have to match on both sides. But it produces a coupling that is readable by agents and checkable by the compiler. While I'm creating a tighter link between producer and consumer, I'm creating one that is easier to find, easier to reason about, and easier for an AI agent to work with correctly. If one adds comments explaining what a and b actually mean (and of course, better identifiers than single letters would help too), then one adds semantic meaning on top of the structural contract.

Contrast this with void function2(std::map<std::string, std::any>& params), the ultimate flexible interface. Yes, it's wide open, one can pass values of any type under any string key, but it defines no contract at all. There are no named parameters, no defined structure, no types to check. This interface is simply too wide to be useful as a guardrail.
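The same contrast can be sketched in Python, with a dataclass standing in for the C++ struct. This is only an illustration under assumptions: the field meanings and the key names label and count are made up. Python has no compiler pass here, so the check happens when the object is constructed, or earlier if a static type checker is part of the build.

```python
from dataclasses import dataclass
from typing import Any


@dataclass(frozen=True)
class Widget:
    a: int   # hypothetical meaning: an item count
    b: str   # hypothetical meaning: a display label


def consumer(w: Widget) -> str:
    return f"{w.b} x{w.a}"


def consumer_wide(params: dict[str, Any]) -> str:
    # Nothing says which keys exist; a wrong guess silently yields None.
    return f"{params.get('label')} x{params.get('count')}"


typed_result = consumer(Widget(a=42, b="hello"))
wide_result = consumer_wide({"a": 42, "b": "hello"})  # key names guessed wrong

try:
    Widget(a=42, label="hello")  # unknown field name is rejected
    typo_rejected = False
except TypeError:
    typo_rejected = True
```

The wide interface accepts the mismatched keys without complaint and produces garbage, while the typed one refuses the unknown field name outright.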

Antidotes to Path Dependency in Code

Here is a collection of antidotes to path dependency in code. They are drawn from practical experience, not from any software engineering textbook. All of them are well-known software development and engineering concepts, but each has a particular twist in the AI coding age, with a slightly different emphasis and importance now that AI agents are writing the code:

- Modularity

  - defined interfaces and contracts

  - exchangeable implementations

  - planned interface evolution

- Exchangeable implementations

  - fast and good harnesses

  - automation

  - formal specification

- Testing

- Documentation

- Larger repositories

  - with modular build systems

- Tracking external dependencies

Let's go through each.

Modularity

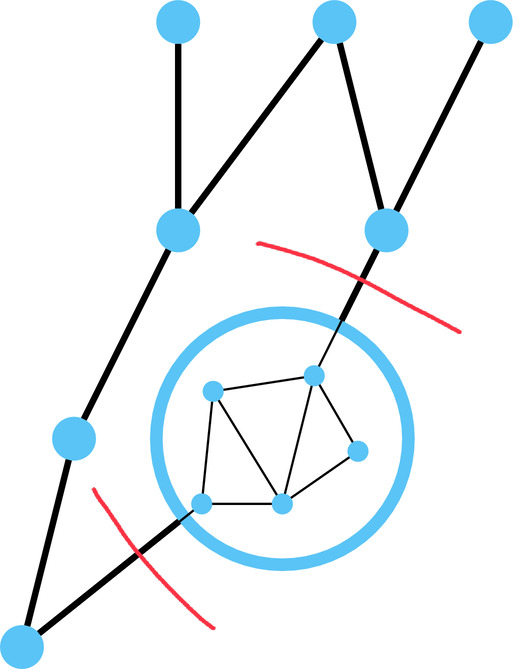

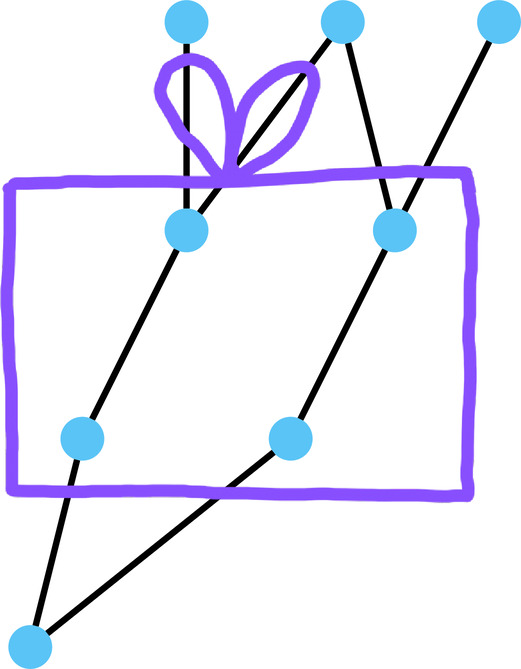

Modularity means grouping interdependent pieces of code into substructures and clearly defining the boundaries between them. You take a group of interdependent components, draw a boundary around them, and define a clean interface to the outside world. In graph terms: take a densely connected subgraph, collapse it into a single node, and define the edges that cross the boundary.

In the AI coding age, these contracts between modules must be readable by agents. If another team—or another AI agent—needs to use your module, you must provide a machine-readable contract that an AI can implement against. The agent on the other side needs to be able to read the interface specification and produce correct code against it, without human intervention explaining what the undocumented conventions are.

The typed parameter struct is the simplest and most effective example: a data structure with named, typed fields, annotated with comments explaining semantics. If the Widget example had comments explaining what a and b actually do—and of course, better identifiers than a and b would be even more valuable—you'd have a contract that is both human-readable and agent-readable. This is one of the best tools we have to produce contracts between various systems and to specify the dependencies between them.

But here's a crucial distinction: while contracts must be readable by LLMs, they must be checkable by automation—not by LLMs. This is one of the most important points to emphasize. LLMs, or AI agents, do not produce truth. They are not truth machines. An LLM is a stochastic guessing machine—a very good one, remarkably capable by now, but by its nature, it only guesses the next token. It cannot produce truth. The compiler, on the other hand, will check that every usage of the Widget data structure accesses only those attributes that are defined. That is truth—that is something the compiler will check for us, deterministically, every single time. The contract must be readable by the LLM so it can generate correct code, but the correctness must be verified by a system that actually produces truth.

Protobuf messages are essentially fancy typed parameter structs with serialization and more. They're contracts between software modules, readable by agents and checkable by tooling. We need to create many more of these kinds of machine-readable, machine-checkable contracts.

A note on classes: When one takes a typed parameter struct and starts adding methods, creating a class, what changes? I believe that's actually making the interface more complex. A class hides the data of the typed parameter struct: all of the attributes are declared as private, and the interface to the outside world becomes a set of functions. Those functions have semantic meaning about how the internal state changes: one must call them in certain sequences, certain methods are prerequisites for others, and the behavior depends on the current state. A useful comparison is a car: a car has lots of levers and buttons, and they have to be pressed in some particular order for things to happen correctly. That's more complicated than a typed parameter struct, where one simply accesses the attributes: you can read them and write them, and that's it. Classes can be useful for encapsulation and behavior, but for defining contracts and interfaces between modules, they may be a step further than needed.
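To make the difference concrete, here is a small Python sketch. ConnectionSettings and Connection are hypothetical names invented for this illustration. The struct can be read and written in any order, while the class only works if its methods are called in the right sequence.

```python
from dataclasses import dataclass


@dataclass
class ConnectionSettings:
    """Plain struct: every field can be read or written at any time."""
    host: str
    port: int


class Connection:
    """Class: the methods only work when called in a particular order."""

    def __init__(self, settings: ConnectionSettings) -> None:
        self._settings = settings
        self._is_open = False

    def open(self) -> None:
        self._is_open = True

    def send(self, payload: str) -> str:
        if not self._is_open:
            raise RuntimeError("send() called before open()")
        return f"{self._settings.host}:{self._settings.port} <- {payload}"


settings = ConnectionSettings(host="localhost", port=8080)
conn = Connection(settings)

try:
    conn.send("ping")          # wrong order: open() has not been called yet
    order_enforced = False
except RuntimeError:
    order_enforced = True

conn.open()
sent = conn.send("ping")       # correct order
```

The ordering rule lives only in the method bodies, not in the signatures, so neither a reader of the interface nor a type checker can see it. That is the extra complexity the paragraph above refers to.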

Exchangeable Implementations

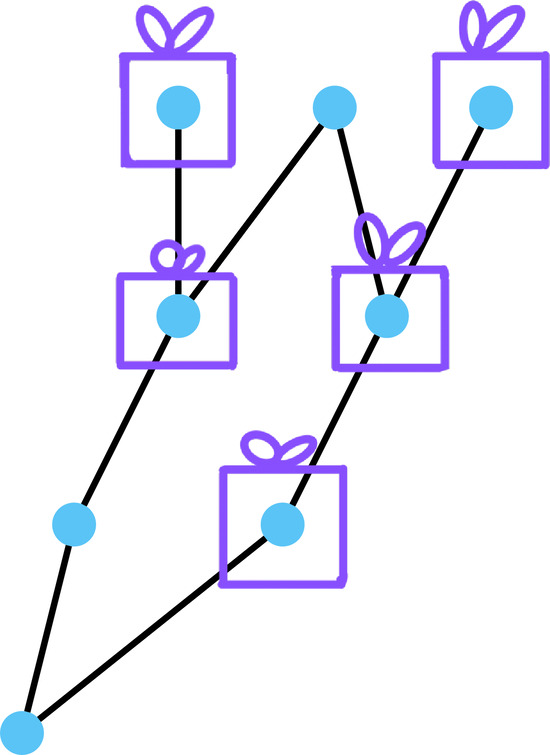

This is an underappreciated tool for validating interfaces. The idea is simple but powerful: if one has a good interface or contract, then you should be able to create multiple exchangeable implementations of it. The existence of multiple implementations that satisfy the same contract is strong evidence that the contract is well-defined.

The Internet RFCs embody this principle beautifully. Before an RFC can become a full standard, it requires two independent implementations that can interoperate. Someone writes a long specification document, and then two different software development teams implement the same thing independently. Only if these two different implementations can speak to each other—can interoperate correctly—can that RFC become a full standard. This requirement validates that the contract written in the RFC is actually well-defined enough to be implemented without ambiguity.

We can apply the same principle in everyday software development:

- Mock implementations that implement only a subset of a contract—for specific values, without an entire database engine behind them, etc. In the AI age, we should be using many more mock implementations, because AI agents can now generate them quickly. An agent can read an interface definition and produce a mock implementation in minutes, giving us a way to validate the interface without building the full system.

- Subclasses implementing the same base class interface are also exchangeable implementations. Every time you have two classes that extend the same abstract base, you've validated that the interface is clear enough to support multiple independent realizations.

- A real-world analogy: the Affordable Care Act's bronze, silver, and gold plans are essentially interfaces to healthcare. They define some standardized interface—what treatment you get for what price, what level of coverage is provided. Different insurers then implement these plans in different ways, and those offering these plans can try to internally push down their costs, creating a competitive landscape. Whether it works perfectly is debatable, but it serves as a fascinating example of a standardized interface with multiple independent implementations—exactly the principle that validates interface quality.
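In code, the idea might look like the following Python sketch: one contract, two independent implementations, and a single check that runs unchanged against both. UserStore, DictUserStore, ListUserStore, and check_contract are hypothetical names for this illustration, not part of any library.

```python
from typing import Optional, Protocol


class UserStore(Protocol):
    """The contract: store a name under an id and read it back."""

    def put(self, user_id: int, name: str) -> None: ...
    def get(self, user_id: int) -> Optional[str]: ...


class DictUserStore:
    """Implementation 1: backed by a dict."""

    def __init__(self) -> None:
        self._rows: dict[int, str] = {}

    def put(self, user_id: int, name: str) -> None:
        self._rows[user_id] = name

    def get(self, user_id: int) -> Optional[str]:
        return self._rows.get(user_id)


class ListUserStore:
    """Implementation 2: an append-only log where the latest entry wins."""

    def __init__(self) -> None:
        self._log: list[tuple[int, str]] = []

    def put(self, user_id: int, name: str) -> None:
        self._log.append((user_id, name))

    def get(self, user_id: int) -> Optional[str]:
        for logged_id, name in reversed(self._log):
            if logged_id == user_id:
                return name
        return None


def check_contract(store: UserStore) -> bool:
    """One contract check, run unchanged against every implementation."""
    store.put(1, "ada")
    store.put(1, "grace")  # an overwrite must be visible to later reads
    return store.get(1) == "grace" and store.get(2) is None


results = [check_contract(DictUserStore()), check_contract(ListUserStore())]
```

If the second implementation had been hard to write, or had needed knowledge that is not in the UserStore definition, that would be a hint that the contract is underspecified.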

Planned Interface Evolution

Creating one good interface isn't enough—we have to expect that it will change and be extended in the future. And we need to plan for that evolution without breaking the dependencies that we create by having this interface.

Protobuf is a prime example of planned interface evolution: adding fields is safe. You add a field, and existing clients will still be able to talk to the server that now has additional fields. One must figure out what to do with missing fields—the fields that the older client does not provide—and default parameters can help, but they can also make things more complicated. You have to test that the existing old clients still see the same behavior from the server, even after the new fields are added. But fundamentally, the interface can grow without breaking.
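The rule "unknown fields are skipped, missing fields get a default" can be imitated in a few lines of plain Python. This sketch does not use the Protobuf library or its wire format, and the message names, the max_results field, and its default of 10 are assumptions made for illustration; real Protobuf has its own rules for default values.

```python
from dataclasses import dataclass, fields


@dataclass
class SearchRequestV1:
    query: str = ""


@dataclass
class SearchRequestV2:
    query: str = ""
    max_results: int = 10  # field added later; old clients get this default


def decode(message_type, payload: dict):
    """Build a message from a payload: skip unknown keys, default missing ones."""
    known = {f.name for f in fields(message_type)}
    return message_type(**{k: v for k, v in payload.items() if k in known})


old_client_payload = {"query": "shoes"}
new_client_payload = {"query": "shoes", "max_results": 3}

# A new server reading an old client's message fills in the default.
new_server_sees = decode(SearchRequestV2, old_client_payload)

# An old server reading a new client's message ignores the field it doesn't know.
old_server_sees = decode(SearchRequestV1, new_client_payload)
```

Both directions keep working, but the sketch also shows where the testing burden lands: someone has to confirm that a default of 10 really reproduces what old clients experienced before the field existed.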

Named parameters are another important mechanism and should be used as much as possible. Languages like Python and Scala support named parameters, which define a backwards-compatible interface: you can add new named parameters with default values without breaking the existing callers. One has to make sure that the default values of the added parameters retain the existing behavior, but you can do this without breaking anything. Named parameters also carry more semantic meaning than positional arguments—when you read search(query="shoes", max_results=10, sort_by="relevance", advanced=False), the intent is immediately clear in a way that search("shoes", 10, "relevance", False) is not.
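As a sketch of this kind of backwards-compatible extension, take the search call from the paragraph above and add one more named parameter. The in-memory CATALOG, the toy matching logic, and the added parameter in_stock_only are all hypothetical.

```python
CATALOG = [
    {"name": "red shoes", "in_stock": True},
    {"name": "blue shoes", "in_stock": False},
    {"name": "green hat", "in_stock": True},
]


def search(query, max_results=10, sort_by="relevance", advanced=False,
           *, in_stock_only=False):
    """Toy search over CATALOG.

    in_stock_only was added later as a keyword-only parameter; its default
    of False keeps the behavior that existing callers already rely on.
    (advanced is accepted but ignored in this toy version.)
    """
    hits = [item["name"] for item in CATALOG
            if query in item["name"] and (item["in_stock"] or not in_stock_only)]
    if sort_by == "name":
        hits.sort()
    return hits[:max_results]


# Existing call site, written before in_stock_only existed: same result as before.
old_call = search(query="shoes", max_results=10, sort_by="relevance", advanced=False)

# New call site that opts in to the new parameter.
new_call = search(query="shoes", in_stock_only=True)
```

Making the new parameter keyword-only (the bare * in the signature) means nobody can pass it positionally by accident, so later additions cannot shift the meaning of existing arguments.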

Anti-Examples

- REST API versioning (/v1/, /v2/, /v3/): once one publishes v1, it is fixed. Yes, one can sneak in new parameters, but that's generally not good style. You will have to support v1 forever, and if you want to create something new, you're going to create v2. And so on. This isn't evolution; it's creating multiple, frozen interfaces. Each version is a complete snapshot that must be maintained indefinitely.

- func(dict[str, Any]): the ultimate wide-open interface. One can pass key-value parameters of any type, but it doesn't actually define any interface, except that it's a dictionary. There's no naming of parameters, no defined contract like the parameter struct. It's simply too wide to be checkable. Give this to an LLM, and it will start guessing key names: names that might sound right but have nothing to do with the actual schema. Even a JSON schema often only defines structure, not the semantics of the different keys.

AI Agents Change the Calculus: Broad-Scale Forced Refactoring with Required Parameters

With AI agents, we now have an interesting new option. We can add required named parameters and have the agent update all dependencies across the codebase.

Instead of relying on optional defaults—which, while backwards-compatible, accumulate tech debt over time as the number of optional parameters grows—we can make breaking changes and have the agent tirelessly update every call site. The agent will work through every file that calls the modified function and add the required parameter, ensuring consistency everywhere. This means we can create less technical debt than before, because we're no longer forced to choose the path of least resistance (optional parameters with defaults) when the right answer is a required parameter.
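A small Python sketch of what "required" buys us. The function charge, its currency parameter, and the two call sites are hypothetical. In a compiled, typed language the compiler would flag the stale call site; in Python the same mistake surfaces at call time, or earlier through a static type checker.

```python
def charge(amount_cents, *, currency):
    """currency is required and keyword-only: there is no default to fall back on."""
    return f"{amount_cents / 100:.2f} {currency}"


def stale_call_site():
    return charge(1999)  # written before currency existed


def updated_call_site():
    return charge(1999, currency="EUR")


try:
    stale_call_site()
    stale_call_fails = False
except TypeError:
    stale_call_fails = True

updated = updated_call_site()
```

Every stale call site fails loudly instead of silently picking up a default, and that list of failures is precisely the to-do list an agent can work through.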

Testing in the AI Age

Testing is a general agility unlock. In the AI age, testing becomes even more important, because agents run tests more frequently than humans do—they may run them after every change, often multiple times per iteration. And agents can also create tests, so we will have more of them. The combination of more frequent execution and more tests being written means that testing occupies a larger share of the development cycle than ever before.

But tests are a double-edged sword. Every test adds a dependency: each line of test code that references a line of production code is another edge in the dependency graph, as I showed in the simple examples. Add too many, and you deepen the grooves of path dependency, which makes changing the insides harder. Each test expects specific behavior and locks it in, so any change to the implementation requires updating all the tests that depend on that behavior.

The balance between test coverage and path dependency has always existed, but it matters more now. AI agents will happily create as many test dependencies as you ask for: they'll generate hundreds of unit tests if you let them, each one adding another edge to the dependency graph.

My recommendation: favor integration tests that verify the expected behavior of larger components over fine-grained unit tests that lock down implementation details. While unit tests are good for adding line coverage to all of the little details—and that's important too, of course—the bigger integration tests that demonstrate expected behavior to the outside world are more valuable for managing path dependency. They test what a module does without constraining how it does it, leaving more room for internal refactoring. Yes, integration tests can be slow—a 70-minute integration test is not unusual for complex systems—but they verify the contract rather than the implementation.
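The same idea can be shown at a very small scale. In this sketch, `top_words` and `_tokenize` are hypothetical names: the test depends only on the public function, so the private helper can be rewritten or removed without touching the test.

```python
import unittest
from collections import Counter


def _tokenize(text: str) -> list[str]:
    """Internal helper (hypothetical); free to change or disappear."""
    return text.lower().split()


def top_words(text: str, n: int) -> list[tuple[str, int]]:
    """Public contract: the n most frequent words, case-insensitive."""
    return Counter(_tokenize(text)).most_common(n)


class TopWordsBehaviorTest(unittest.TestCase):
    """References only the public function, not the helper behind it."""

    def test_counts_are_case_insensitive(self) -> None:
        self.assertEqual(top_words("Tea tea coffee", 1), [("tea", 2)])
```

A test written directly against `_tokenize` would add one more edge into the inside of the module. The behavior test above adds an edge only to its boundary.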

Tests must also be simple to run—agents need to execute them. An anti-example is a web interface where one has to press buttons and toggle many settings in the right configuration to test the system. Tests should be invocable from the command line, with a single command, so that an AI agent can execute them as part of its workflow without human assistance.

Build systems like Bazel shine here: Bazel computes a dependency graph of changes and runs only those tests affected by a modification. So when you change one source file, Bazel figures out which tests depend on that file—directly or transitively—and runs only those tests, skipping everything else. This kind of targeted test execution is a great agility unlock, especially when AI agents are driving rapid iteration cycles.
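The underlying graph idea fits in a few lines. This is not how Bazel is implemented; it is a toy sketch over a made-up build graph (`DEPS` and `TESTS` are hypothetical) that walks the reversed dependency edges from the changed targets to find the tests that need to run.

```python
from collections import defaultdict, deque

# Hypothetical build graph: each target maps to the targets it depends on.
DEPS = {
    "parser_test": ["parser"],
    "report_test": ["report"],
    "net_test": ["net"],
    "report": ["parser", "format"],
    "parser": ["lexer"],
    "lexer": [],
    "format": [],
    "net": [],
}
TESTS = {"parser_test", "report_test", "net_test"}


def affected_tests(changed: set[str]) -> set[str]:
    """Return tests that depend on any changed target, directly or transitively."""
    # Reverse the edges: for each target, who depends on it?
    reverse = defaultdict(set)
    for target, deps in DEPS.items():
        for dep in deps:
            reverse[dep].add(target)
    # Breadth-first walk from the changed targets along reversed edges.
    seen = set(changed)
    queue = deque(changed)
    while queue:
        node = queue.popleft()
        for dependant in reverse[node]:
            if dependant not in seen:
                seen.add(dependant)
                queue.append(dependant)
    return seen & TESTS
```

In this graph, a change to `lexer` selects `parser_test` and `report_test` but skips `net_test`, because nothing on the path to `net_test` depends on the lexer.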

An automatic dependency system that figures out which tests need to run based on what changed is enormously valuable, and it's the kind of infrastructure investment that pays increasing dividends as AI agents generate more code and more tests.

Documentation Is More Important

Documentation is more important in the AI age for a simple reason: LLMs are very fast readers. Humans are slow readers—I appreciate you, reader, taking the time to reach this paragraph.

LLMs consume documentation almost instantly and use it to produce better code. This single fact changes the economics of documentation: the return on investment for writing good documentation has increased dramatically, because a primary consumer of that documentation is now a tireless, fast-reading machine.

This starts at the source code level: comments and annotations placed as close as possible to the actual code, where both humans and AI agents will immediately find them. Code comments are more important now than they've ever been, because the AI agent reading your code will use those comments to understand intent and produce better modifications. But AI agents can also consume larger documents (design docs, architecture decision records, interface specifications), because they read fast and context windows keep growing. Even quite long documents no longer fill up the context window of a modern LLM.

Consider documentation files—Architecture Decision Records (ADRs), design docs, interface specifications—for every significant code module. Not everything can be expressed as typed data structures. We also need to be able to write prose text that the LLMs understand to make the interfaces comprehensible—text that explains the semantic meaning, the why behind decisions, the intended usage patterns, and the edge cases that the type system alone can't capture. We can also use the AI agents themselves to produce these documents, with human reviewers ensuring accuracy and completeness.

Anti-example: JSON files without documentation. Hand an undocumented JSON file to an agent, and you can watch it make stochastic guesses about the schema. It will come up with new key names that might sound right but have nothing to do with the actual schema behind the JSON. It gets better with a JSON schema, but many schemas only define the structure. They don't describe what the keys actually mean or what the different data structures inside the JSON represent, because it's really hard to write semantics inside a JSON schema. JSON documentation is an important area for improvement, and good tools for this don't exist yet.
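One possible stopgap, sketched here under my own assumptions, is to keep the meaning of each key next to a typed record in the code and to reject keys that are not documented. `SensorConfig`, its fields, and the `doc` metadata key are all hypothetical; this does not replace the missing tooling.

```python
import json
from dataclasses import dataclass, field, fields


@dataclass(frozen=True)
class SensorConfig:
    """Hypothetical config record; metadata carries the meaning of each key."""
    interval_s: float = field(metadata={"doc": "Seconds between two readings."})
    threshold_c: float = field(metadata={"doc": "Alarm threshold in degrees Celsius."})


def load_config(text: str) -> SensorConfig:
    """Parse JSON and reject keys that are not part of the documented record."""
    raw = json.loads(text)
    known = {f.name for f in fields(SensorConfig)}
    unknown = set(raw) - known
    if unknown:
        raise KeyError(f"undocumented keys: {sorted(unknown)}")
    return SensorConfig(**raw)


def describe() -> dict[str, str]:
    """Key-by-key semantics, readable by humans and agents alike."""
    return {f.name: f.metadata["doc"] for f in fields(SensorConfig)}
```

An agent that guesses a key such as `threshold` instead of `threshold_c` gets an immediate error instead of a silently ignored setting, and `describe()` gives it the documented meaning of every valid key.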

Anti-example: design docs in Google Drive. Design docs kept as Google Docs or in some other online document store are not discoverable for a coding agent. They must be converted to in-repository plain text documents, placed close to the source code they explain. Otherwise an AI coding agent cannot find them.

Larger Repositories

In the AI age, larger repositories will become more popular and more useful. An AI agent can act quickly across many modules within a single repository, making atomic changes across module boundaries. When an interface changes, the agent can update every module that depends on it in a single commit, ensuring consistency across the entire codebase.

Code dependencies between modules are unavoidable—code is pure dependencies, as I've argued throughout this article. But if those modules live in one repository, we can more easily change the contracts between them, because all the dependencies are fully enclosed within the repo. Agents are great at refactoring, so we can more easily reduce technical debt. When one wants to rename a concept, change a data structure, or evolve an interface, having all the dependent code in one place means the refactoring is self-contained.

Could AI agents operate across multiple repositories? Yes—the current generation of agents can already do this. But AI agents across multiple repos don't eliminate the interdependency between the modules. The code dependency between modules still exists regardless of where the code lives. If multiple modules are in one larger repository, changing contracts is simpler because the change is self-contained—you don't need to coordinate releases across repositories, manage version bumps, or worry about transient states where one repo has been updated but another hasn't.

This goes against the popular microservices paradigm, where each service gets its own repository and services define interfaces to interact with each other. But large companies, Google being the most prominent example, have demonstrated that mono-repos work at enormous scale. They've also created better build systems with compilation caching and test caching (like the Bazel system I mentioned earlier), which enable them to make atomic changes efficiently even across millions of lines of code. Since AI agents are now so much faster at writing code, the cost of testing the interaction between different components is going up in relative terms, which makes the atomic change capability of mono-repos more valuable.

But a mono-repo must not become a tangled mess. Despite having everything in one repository, components must still stay neatly separated—like the organized yarn balls on the right, not the tangled mess on the left. Bigger repos will naturally tend toward the tangled state if left unchecked, which is why the quality of human code review in larger mono-repos with many dependencies must be high. The convenience of atomic changes must not become an excuse for spaghetti dependencies—you still need clear module boundaries, and reviewers must enforce those boundaries with every pull request.

Larger Deployment Packages

Connected to larger repositories are larger deployment packages. Larger packages lead to fewer dependencies during deployment. Think about what happens when you replace a package with the next version: in theory, you have to test all of the dependencies, forward and backward, along the entire chain, to check whether everything still works together. You do this by testing the new version against the old one, verifying compatibility at every interface boundary.

Life gets easier with larger deployment packages—not necessarily larger in byte size, because that gets onerous to copy everywhere, but larger in scope. The key insight is that the dependencies inside these packages no longer need to be tested at deployment time, because they've already been validated by other mechanisms—build-time tests, integration tests, and all the verification that happened before the package was assembled. Only the external interfaces need verification at deployment time. The more you can internalize within a single deployment unit, the fewer cross-package dependencies you need to validate during the deployment itself.
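A toy calculation illustrates the effect. The module names, edges, and package assignments below are invented for this sketch. It counts the dependency edges that cross a package boundary, which are the only ones that need verification at deployment time.

```python
# Hypothetical module-level dependency edges (caller, callee).
EDGES = [
    ("api", "auth"),
    ("api", "billing"),
    ("billing", "ledger"),
    ("auth", "ledger"),
    ("ledger", "storage"),
]


def cross_package_edges(packaging: dict[str, str]) -> list[tuple[str, str]]:
    """Edges whose endpoints ship in different deployment packages."""
    return [(a, b) for a, b in EDGES if packaging[a] != packaging[b]]


# One package per module: every edge must be verified at deployment time.
fine_grained = {m: m for m in ["api", "auth", "billing", "ledger", "storage"]}

# Larger scope: three modules ship together as "core", so the edges between
# them were already covered by build-time and integration tests.
coarse = {
    "api": "frontend",
    "auth": "core",
    "billing": "core",
    "ledger": "core",
    "storage": "storage",
}
```

With one package per module, all five edges cross a boundary. With the coarser packaging, only three remain, because the two edges inside `core` have become internal.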

External Users: The Hardest Problem

Once you define an interface used by external users, you must honor it. This is the hardest problem of all, because you now have a dependency on the outside world that you can no longer control. Dependencies among repositories that you control yourself are manageable, but once the interface is used by code that someone else maintains (code you cannot simply update with an AI agent), the constraint becomes much harder to manage.

Frankly, there are no fantastic answers for this one. It's a challenge that every large organization faces every day, and there are no silver bullets. The standard approaches include:

- Stable serialization protocols like Protobuf, where older versions can still communicate with newer ones. The protocol itself is designed for forward and backward compatibility, which is a form of stable interface evolution.

- Semantic versioning, which specifies which version changes may break the interface. Major versions break; minor versions extend; patch versions only fix. This at least communicates the intent, even if it doesn't solve the underlying problem.

- Mandatory deprecation protocols—once there's an external user, you need a clear process for retiring old interfaces. One interesting approach is time-based deprecation: "this interface will be supported for one year, then it's gone."

Some ecosystems use this in practice—they will automatically recompile dependent systems with new versions, and if those systems break, their owners are notified. This deadline-based approach forces a cadence of updates, rather than letting old interfaces accumulate indefinitely.
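The semantic versioning rule from the list above can be written down as a short check. This is a simplified sketch with hypothetical function names: it handles only plain `MAJOR.MINOR.PATCH` strings and ignores pre-release tags and the special rules for `0.x` versions.

```python
def parse_version(text: str) -> tuple[int, int, int]:
    """Parse 'MAJOR.MINOR.PATCH' into integers (no pre-release or build tags)."""
    major, minor, patch = (int(part) for part in text.split("."))
    return major, minor, patch


def is_compatible(required: str, provided: str) -> bool:
    """True if `provided` can stand in for `required` under semantic versioning."""
    req, prov = parse_version(required), parse_version(provided)
    # A different major version signals a breaking change.
    if req[0] != prov[0]:
        return False
    # Same major: newer minor and patch releases only add or fix.
    return prov >= req
```

A consumer built against `1.4.0` accepts `1.6.2`, but not `2.0.0` (breaking) and not `1.3.9` (older than what it was built against).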

Anti-example: REST APIs without deprecation deadlines. Once you publish /v1/, you basically have to support this version forever if there's no sunset date. Without a defined timeline for retirement, interfaces become immortal burdens: you must maintain them indefinitely, consuming engineering resources that could be spent on moving the system forward. What all of this boils down to is a dependency system that tracks all external consumers and enforces deprecation timelines.
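A deadline can also be attached to the interface itself. The decorator below is a sketch with a hypothetical name (`sunset`): before the date it emits a standard `DeprecationWarning`, and on or after the date it refuses to run. The `today` parameter exists only so that the behavior can be tested with a fixed date.

```python
import warnings
from datetime import date
from functools import wraps


def sunset(on: date, today=date.today):
    """Warn before the sunset date; refuse to run on or after it."""
    def decorate(func):
        @wraps(func)
        def wrapper(*args, **kwargs):
            if today() >= on:
                raise RuntimeError(
                    f"{func.__name__} was retired on {on.isoformat()}"
                )
            warnings.warn(
                f"{func.__name__} will be retired on {on.isoformat()}",
                DeprecationWarning,
                stacklevel=2,
            )
            return func(*args, **kwargs)
        return wrapper
    return decorate
```

Applied as `@sunset(date(2030, 1, 1))` on a handler, the retirement date becomes part of the code that agents and humans read, instead of living in a changelog nobody opens.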

5. Conclusion

We explored path dependency—a very general property of complex, dynamic systems—and saw how it manifests in keyboard layouts, healthcare systems, and most importantly, in source code. Code is pure dependencies, and those dependencies exhibit path dependency: early decisions create grooves that become increasingly difficult to escape. Every function call, every shared data structure, every interface creates a link in the dependency graph, and when those links get tangled, changing anything becomes disproportionately hard.

AI agents produce code incredibly quickly. But we have to harness them. Our role as software engineers has shifted: we now create guardrails—interfaces, contracts, tests, documentation—that guide AI agents to produce the desired code without getting tangled in path-dependent messes. We are no longer primarily writing code; we are defining the constraints and structures within which code is written.

The antidotes to path dependency in code form a toolkit for this new era:

- Modularity – define interfaces between complex subgroups of dependencies, readable by agents and checkable by automation. The typed parameter struct is the simplest building block; the compiler enforces the contract.

- Exchangeable implementations – validate interface quality through multiple implementations. If two independent implementations can interoperate against the same contract, the contract is well-defined.

- Planned interface evolution – extend interfaces without breaking dependencies. Protobuf's additive fields, named parameters with defaults, and AI-assisted required-parameter updates all reduce the accumulation of technical debt.

- Testing – more important but also more dangerous; every test adds a dependency edge. Favor integration tests that verify behavior over unit tests that lock down implementation details.

- Documentation – feed the fast readers. LLMs consume documentation instantly, and the growing context windows of modern models make even lengthy design docs accessible. Not everything can be expressed as typed data structures; sometimes we need prose.

- Larger repositories – enable atomic cross-module changes and easier refactoring. Mono-repos with good build systems let AI agents make sweeping changes that are self-contained and fully testable.

- External dependency tracking – the hardest problem, requiring deprecation protocols, stable serialization formats, and clear timelines for interface retirement.

The goal of this article was to have you think about code in a slightly different way: as a graph of dependencies that we must keep manageable. AI agents can now produce code very quickly, but we have to harness them—and we can do this by defining the guardrails, the interfaces, the methodologies that make it easier for AI agents and for us to change the inner nodes of our systems. What generic patterns can we adopt to make our codebases less path-dependent, more amenable to the rapid iteration that AI agents make possible?

Perhaps this is the future of software engineering.